Spec-Driven Development Doesn't Fix the Requirements Problem

Every few years, a new methodology arrives promising to solve software's oldest problem: nobody can ever quite say what they want.

Waterfall promised it. Agile promised it. DDD promised it. TDD and BDD promised it. Each was pitched, in some form, as the discipline that would finally make requirements complete and unambiguous. None of them did, because none of them attacked the actual cause. And now, Spec-Driven Development is following the same pattern. It's worth being precise about what it does and does not deliver before another organization commits to it as a transformation.

This is not a takedown of SDD. SDD adds something real. But the gap between what it adds and what it is being sold as is large, and the cost of that gap is teams adopting yet another half-fix, watching it kind-of-sort-of work, and concluding that the requirements problem is just unsolvable. It is not unsolvable. It is just being attacked at the wrong layer, again.

Want better code? Use better specs and a more precise language. The compiler can only catch what the requirement actually said, and most requirements do not say much.

- Spec-Driven Development is the newest methodology pitched as fixing the requirements problem. Like every methodology before it, it relocates the problem rather than solving it. Vague requirements still produce vague systems; SDD just stores the vagueness in markdown.

- EARS, a set of five sentence patterns for writing requirements, attacks the problem where it lives: at the sentence. Combined with explicit scope statements and a multi-model LLM gap-analysis pass, it produces specs that are roughly 80% complete before a domain expert ever sees them.

- This is the same argument we made about Scala types, one layer up. Precise specs drive precise type signatures drive precise compiler-validated code. The disciplines stack. SDD is not the multiplier. The stack is.

What Spec-Driven Development Is (And Isn't)

The honest description of SDD: the durable artifact of value moves from code to specification. AI generates the implementation and engineers shift toward editing generated output. The spec becomes versioned, persistent, and in theory the source of truth. Iteration gets cheaper because regenerating implementation from a revised spec is faster than rewriting code by hand.

What is genuinely new is the coupling between spec and generated code. When a Jira ticket is vague, an engineer fills in the missing parts using judgment, the gap gets silently encoded in the implementation, and the original ticket never gets fixed. When a vague spec drives generation, the gap shows up in the output more directly. That visibility is a forcing function on spec quality. It is a real benefit.

It is also a narrow one. SDD does not change whether requirements are complete. It changes how quickly incompleteness becomes visible. A team that writes vague specs will now get vague generated code faster than they used to get vague hand-written code. The methodology is value-neutral on the upstream problem.

This is the part that gets glossed over in the marketing. SDD relocates where the requirements problem lives; it does not solve it. Treating relocation as a solution is how organizations end up with versioned, beautifully stored, AI-consumable requirements that are still ambiguous prose, and then express surprise when the generated systems do not behave correctly.

Where the Requirements Problem Lives

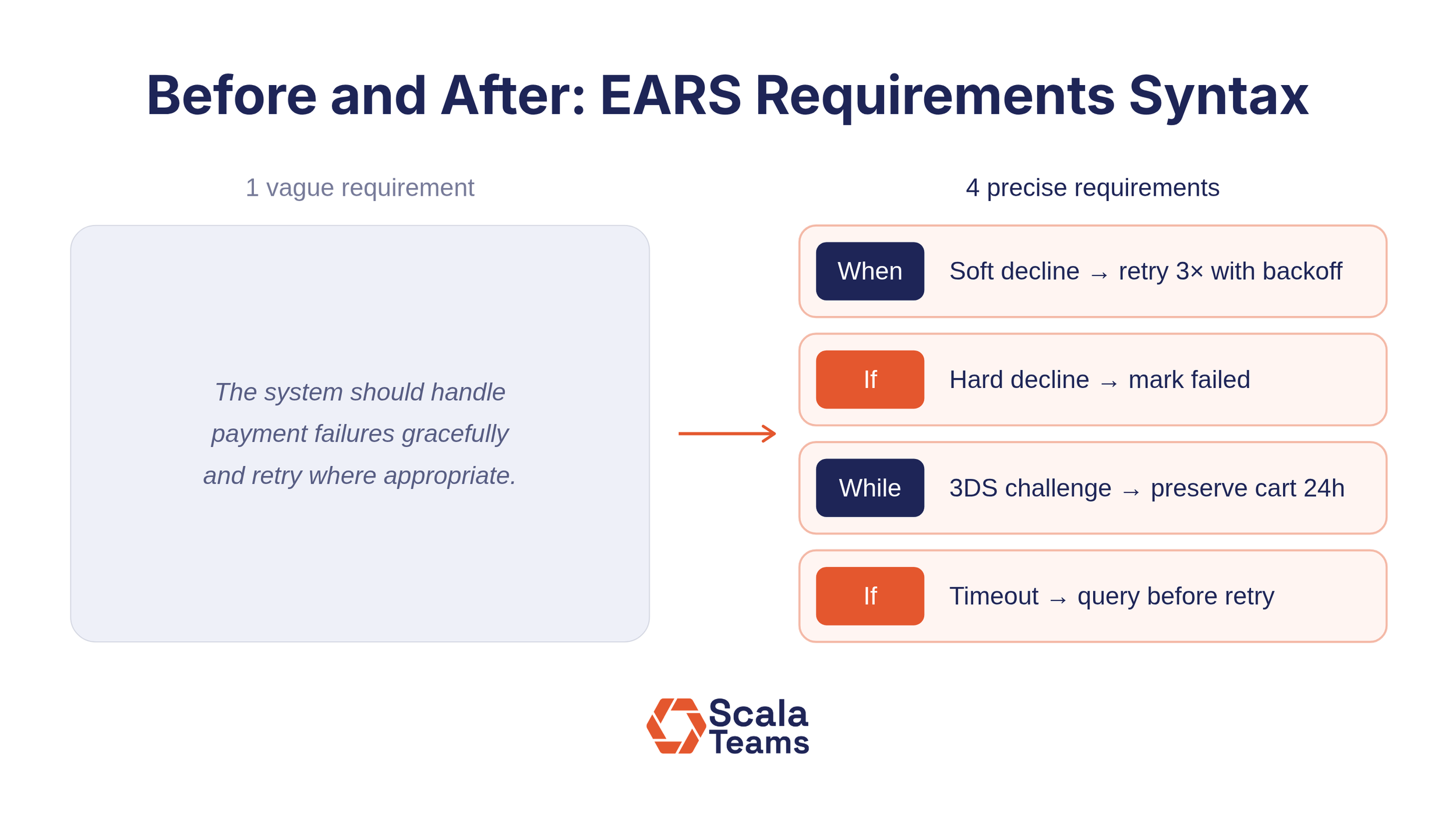

Requirements are incomplete because humans do not know what they want, do not know what they do not know, and do not enumerate failure modes. They write sentences like "the system should handle payment failures gracefully and retry where appropriate" and consider the work done. That sentence has two verbs and infinitely many valid interpretations.

No methodology will fix that sentence by storing it in a different place. The sentence is the problem. The discipline that fixes it has to operate at the level of the sentence itself.

That discipline exists. It is not new, it is not exciting, and it is not being sold as a transformation. It is called EARS, Easy Approach to Requirements Syntax, and it is a writing standard developed at Rolls-Royce in the late 2000s. EARS is not a methodology. It is five sentence patterns:

- Ubiquitous: The system shall do X.

- Event-driven: When X occurs, the system shall do Y.

- State-driven: While X is true, the system shall do Y.

- Optional feature: Where X is included, the system shall do Y.

- Unwanted behavior: If X occurs, then the system shall do Y.

That is the entire system. It is teachable in an afternoon. It works in any medium: Jira, Confluence, markdown, Notion, the back of a napkin. And it attacks the requirements problem where the problem actually lives: at the sentence.
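To show how mechanical the five patterns are, here is a minimal Python sketch that guesses which pattern a sentence follows from its leading keyword. The function name `classify_ears` and the keyword rules are assumptions made for this illustration; they are not part of EARS or of any tool, and sentences that combine several patterns would need a more careful parser.

```python
import re

# Leading keyword -> EARS pattern name. These rules are a simplification
# assumed for this sketch; EARS is a writing standard, not a parser.
_LEADING_KEYWORDS = [
    ("when", "event-driven"),
    ("while", "state-driven"),
    ("where", "optional feature"),
    ("if", "unwanted behavior"),
]


def classify_ears(sentence: str) -> str:
    """Return the EARS pattern a sentence appears to follow, or 'not EARS'."""
    text = sentence.strip().lower()
    # Every pattern commits the system to a response with "shall".
    if not re.search(r"\bshall\b", text):
        return "not EARS"
    for keyword, pattern in _LEADING_KEYWORDS:
        if re.match(rf"{keyword}\b", text):
            # The unwanted-behavior pattern is "If ..., then ... shall ...".
            if keyword == "if" and not re.search(r"\bthen\b", text):
                return "not EARS"
            return pattern
    # No leading keyword: an unconditional "The system shall ..." statement.
    return "ubiquitous"
```

Under these rules, a sentence like "the system should handle payment failures gracefully and retry where appropriate" is classified as "not EARS", because it never commits the system to anything with "shall".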

If EARS is this effective, why isn't it already standard practice? Because it cannot be packaged and sold. There is no certification program, no consulting ecosystem, no transformation roadmap to justify a budget line. It is a writing standard, which means adopting it requires discipline rather than a purchase order. SDD, by contrast, gives organizations a named process to announce and a framework to install. The market consistently rewards methodologies that can be pitched over tools that just work. That is not a knock on the people buying SDD; it is an observation about how the software industry procures solutions to thinking problems.

What Better Requirements Look Like

Here is the original wish, the kind of "requirement" that ends up in tickets every day:

The system should handle payment failures gracefully and retry where appropriate.

Here is the same intent, written in EARS:

When the payment gateway returns a soft decline (network error, issuer timeout, or temporary processing failure), the payment service shall retry the transaction up to three times with exponential backoff starting at two seconds.

If the payment gateway returns a hard decline (insufficient funds, fraud, lost or stolen card), then the payment service shall mark the transaction as failed and present a customer-friendly message mapped from the gateway's decline code.

While a transaction is in 3DS challenge state, the payment service shall preserve the cart contents for at least 24 hours.

If the network connection to the gateway times out during authorization, then the payment service shall not assume failure; the payment service shall query the transaction status using the original idempotency key before retrying or surfacing a result to the customer.

The change is not cosmetic. The original sentence forced no thinking. The EARS version forces the writer to distinguish soft from hard declines (different retry semantics), to specify a timing constraint on cart preservation during 3DS (a real customer-experience trap if forgotten), and to handle the most subtle failure mode in payment processing: the network timeout, where you cannot tell whether the transaction succeeded and incorrect handling produces double charges. That last one is the one that ends up in postmortems.
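Requirements written this way translate almost line for line into decision logic. The Python sketch below is one possible reading of the statements above, not a reference implementation. The names `Outcome`, `Action`, `backoff_delay`, and `next_action` are hypothetical, the doubling factor is an assumption (the requirement says only "exponential"), and failing the transaction after the third retry is also an assumption, because the requirements do not say what happens then.

```python
from enum import Enum


class Outcome(Enum):
    APPROVED = "approved"
    SOFT_DECLINE = "soft_decline"  # gateway reported a temporary failure
    HARD_DECLINE = "hard_decline"  # insufficient funds, fraud, lost or stolen card
    TIMEOUT = "timeout"            # no answer from the gateway: result unknown


class Action(Enum):
    COMPLETE = "complete"
    RETRY = "retry"
    FAIL_WITH_MESSAGE = "fail_with_message"
    QUERY_STATUS = "query_status"


MAX_RETRIES = 3
BASE_DELAY_SECONDS = 2.0


def backoff_delay(retry_number: int) -> float:
    """Delay before retry 1, 2, 3, ...: starts at two seconds and doubles."""
    return BASE_DELAY_SECONDS * 2 ** (retry_number - 1)


def next_action(outcome: Outcome, retries_so_far: int) -> Action:
    """Map a gateway outcome to the behavior the requirements call for."""
    if outcome is Outcome.APPROVED:
        return Action.COMPLETE
    if outcome is Outcome.TIMEOUT:
        # Never assume failure on a timeout: look the transaction up by its
        # original idempotency key before retrying or telling the customer.
        return Action.QUERY_STATUS
    if outcome is Outcome.SOFT_DECLINE and retries_so_far < MAX_RETRIES:
        return Action.RETRY
    # Hard declines, plus soft declines that have used up their retries
    # (the second case is an assumption; the requirements leave it open).
    return Action.FAIL_WITH_MESSAGE
```

Writing the sketch surfaces a question the four requirements leave open (what happens after the third failed retry), which is the kind of gap the structured form makes easy to spot.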

The "If/then" pattern does the most work here. It forces the writer to enumerate failure modes in the same sentence-shape as happy paths. That is exactly where requirements quality typically collapses, because writers default to describing what should happen and leave what could go wrong implicit. EARS does not let you do that.

EARS is not a methodology. It is a syntactic forcing function that makes underspecification visible at the sentence level. That is a smaller claim than SDD makes, and it delivers more value, because it operates at the layer where the problem actually exists.

How LLMs Amplify Structured Requirements

The combination that makes this approach unreasonably effective is structured requirements plus an LLM gap-analysis pass.

Once requirements are in EARS, you can ask a model: what is missing relative to a system of this type? The structure of the document gives the model an enumerable surface to compare against its training data. It can perform something like coverage analysis (which triggers are present, which states are addressed, which unwanted behaviors are handled) and pattern-match the gaps against the universe of similar systems it has seen.
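The phrase "enumerable surface" can be made concrete. The Python sketch below does not describe what a model does internally; it only illustrates that once each requirement carries a pattern label, coverage becomes something you can count. The `Requirement` record and the `coverage` and `uncovered` helpers are hypothetical names introduced for this example.

```python
from collections import Counter
from dataclasses import dataclass

PATTERNS = (
    "ubiquitous",
    "event-driven",
    "state-driven",
    "optional feature",
    "unwanted behavior",
)


@dataclass(frozen=True)
class Requirement:
    pattern: str  # one of PATTERNS, assumed to be labeled by the author
    text: str


def coverage(requirements):
    """Count how many requirements use each EARS pattern."""
    counts = Counter(r.pattern for r in requirements)
    return {pattern: counts.get(pattern, 0) for pattern in PATTERNS}


def uncovered(requirements):
    """List the patterns that no requirement uses yet."""
    return [p for p, n in coverage(requirements).items() if n == 0]


# The four payment requirements from the previous section, abbreviated.
payment_spec = [
    Requirement("event-driven", "When the gateway returns a soft decline, ..."),
    Requirement("unwanted behavior", "If the gateway returns a hard decline, then ..."),
    Requirement("state-driven", "While a transaction is in 3DS challenge state, ..."),
    Requirement("unwanted behavior", "If the connection times out, then ..."),
]
```

For this spec, `uncovered(payment_spec)` reports that no ubiquitous or optional-feature requirements exist yet. An empty category is not automatically a defect, but it is a prompt to ask whether something was left unsaid.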

Run an EARS payment-processing spec through a frontier model and ask for a completeness review, and the output is not generic. It is pointed. It identifies missing requirements around webhook failure-rate circuit breakers, refund timeline customer messaging, explicit PCI SAQ-A declaration, test-mode-key-in-production isolation, and payouts-and-bank reconciliation (not just API-level reconciliation). Those are real production concerns that show up in real postmortems.

Ask the same model to review the original wish, "the system should handle payment failures gracefully and retry where appropriate", and you get vague platitudes about error handling. The model has nothing to anchor against. The structure of EARS is what makes the gap analysis tractable.

This is the multiplier nobody is selling. It is not a platform. It is not a vendor. It is a writing standard plus a feedback loop, and it produces results that a few years ago would have required pulling a senior engineer off another project for an afternoon.

The Scope Trap That Breaks LLM-Assisted Requirements Review

Here is where the workflow runs into a problem that will never appear in an SDD pitch deck.

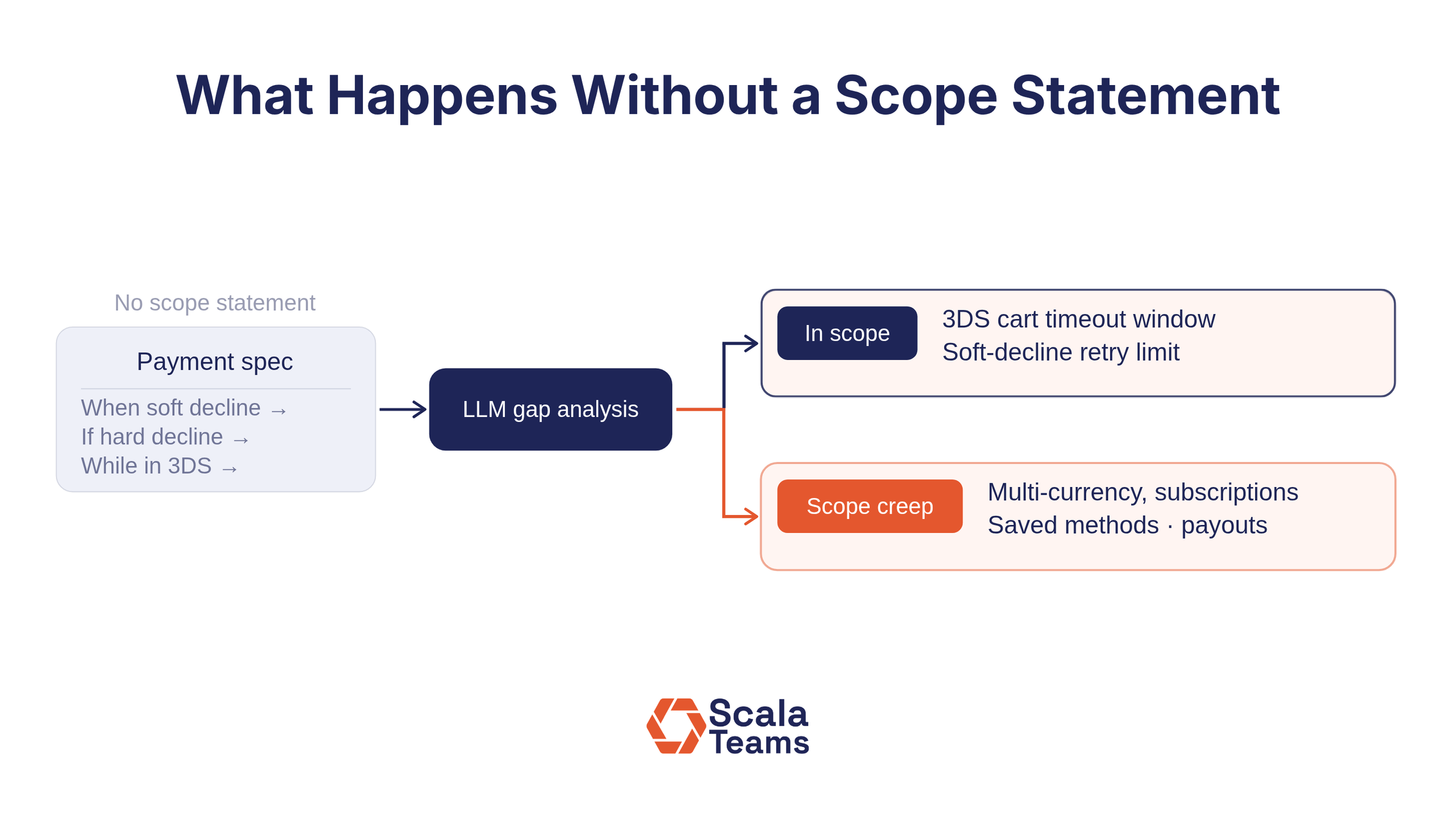

When the example payment spec was generated from the prompt "a simple payment processing system that integrates with Stripe," the word "simple" did all of the unstated scope work. The spec drifted toward what the model thought was a reasonable interpretation of "simple." The gap analysis then drifted toward what the model thought a complete production payment system looked like. Two different LLMs make two different choices about what "simple" means, and when the same EARS spec is reviewed by a second model, the gap list overlaps only partially with the first.

Half the gaps surfaced in the example (multi-currency, subscriptions, account updater, payouts-to-bank reconciliation) dissolve under an explicit scope statement:

This system shall support one-time, single-currency (USD) credit card transactions originating from a US-based ecommerce checkout. Recurring billing, subscriptions, multi-currency, saved payment methods, and payout reconciliation are out of scope for this version.

Without that scope statement, the model is free to compare your spec against an arbitrarily large reference architecture inside its head. With the statement, the gap analysis becomes laser-focused: what is missing relative to the stated scope?
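The effect of the scope statement on a gap list can be sketched in a few lines of Python. This is an illustration under stated assumptions: the `Gap` record, its `topic` tag, and `partition_by_scope` are hypothetical, and the sketch assumes a reviewer has already tagged each gap with a short topic. The topics and descriptions reuse items mentioned in this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Gap:
    topic: str        # short topic tag, assumed to be assigned by a reviewer
    description: str


def partition_by_scope(gaps, out_of_scope_topics):
    """Split gaps into (still relevant, dissolved by the scope statement)."""
    excluded = {topic.lower() for topic in out_of_scope_topics}
    relevant = [g for g in gaps if g.topic.lower() not in excluded]
    dissolved = [g for g in gaps if g.topic.lower() in excluded]
    return relevant, dissolved


# Topics excluded by the scope statement above.
OUT_OF_SCOPE = [
    "recurring billing",
    "subscriptions",
    "multi-currency",
    "saved payment methods",
    "payout reconciliation",
]

reported = [
    Gap("multi-currency", "multi-currency handling"),
    Gap("subscriptions", "subscription billing"),
    Gap("payout reconciliation", "payouts-to-bank reconciliation"),
    Gap("refunds", "refund timeline customer messaging"),
    Gap("webhooks", "webhook failure-rate circuit breakers"),
]

relevant, dissolved = partition_by_scope(reported, OUT_OF_SCOPE)
```

Here three of the five reported gaps land in `dissolved`, leaving the refund and webhook items as the ones that still need a decision.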

This is the failure mode that scales badly as teams adopt LLM-assisted requirements review. A junior PM runs the gap pass, receives a list of "gaps," and starts addressing them all, expanding scope under the guise of completing requirements. The model is, in effect, negotiating scope by enumeration. The spec grows to cover features that were never agreed to, never budgeted for, and never the goal. By the time someone notices, the system being specified is twice the size of the system actually being built.

Scope statements are the requirements that say what the system is not, and they are the most consistently omitted category in the industry. Most underspecification disasters are scope failures, not feature failures: somebody assumed something was in or out of scope, and the assumption never got written down.

How to run LLM gap analysis without expanding scope

The corrected workflow puts scope first:

1. Write the scope explicitly, in EARS-style ubiquitous and "shall not" statements. Be explicit about what is in. Be more explicit about what is out.

2. Write functional requirements in EARS within that scope.

3. Run the LLM gap analysis with the scope statements as part of the input. Prompt the model to flag gaps only relative to the stated scope, and to flag any suggestion that would expand scope as a separate, scope-change category requiring approval.

4. Repeat with a second and third model. Different models catch different things, but only if the scope is pinned. Otherwise, each model invents its own reference architecture, and the lists diverge into noise.

5. Bring the merged result to a domain expert for the final review. They are now reviewing an 80%-complete spec instead of a 40%-complete one. That is the difference between a useful expert review and a frustrating one.

Skip step one, and the rest of the workflow degrades quickly. Pin the scope, and the workflow holds up.
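The prompt-assembly and merge parts of this workflow can be sketched as follows. This is a rough Python illustration, not a prescribed implementation: `build_gap_prompt`, `merge_findings`, and the two category labels are hypothetical names, no model API is called, and the sketch assumes each model's findings have already been reduced to short, comparable labels (in practice, different models word the same gap differently).

```python
from collections import defaultdict

IN_SCOPE_GAP = "in_scope_gap"
SCOPE_CHANGE = "scope_change"


def build_gap_prompt(scope_statements, requirements):
    """Assemble a review prompt with the scope pinned ahead of the requirements."""
    lines = ["SCOPE (authoritative; do not assume anything beyond it):"]
    lines += [f"- {s}" for s in scope_statements]
    lines.append("")
    lines.append("REQUIREMENTS (EARS):")
    lines += [f"- {r}" for r in requirements]
    lines.append("")
    lines.append(
        "List what is missing relative to the stated scope only. "
        f"Label each finding '{IN_SCOPE_GAP}' or '{SCOPE_CHANGE}'. "
        f"Anything that would expand the scope must be labeled '{SCOPE_CHANGE}' "
        "and requires separate approval."
    )
    return "\n".join(lines)


def merge_findings(findings_by_model):
    """Merge (category, label) findings from several models.

    Returns {category: {label: [names of the models that reported it]}}.
    """
    merged = {IN_SCOPE_GAP: defaultdict(list), SCOPE_CHANGE: defaultdict(list)}
    for model, findings in findings_by_model.items():
        for category, label in findings:
            merged[category][label].append(model)
    return {category: dict(by_label) for category, by_label in merged.items()}
```

Keeping scope-change suggestions in their own bucket means the domain expert sees them as decisions to approve or reject, not as defects in the spec. The list of models attached to each label also shows at a glance which findings several reviewers agreed on.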

Why EARS and Scala's Type System Are Solving the Same Problem

If this argument feels familiar, it should. We made it before, about Scala.

A previous post on this blog argued that Scala is the best backend language for AI-assisted development specifically because of properties that look identical in shape to what we have been describing here: strict types, pure functions, explicit effect tracking, sealed traits that force exhaustive case handling. The claim was that those properties, long sold to humans on the basis of testability and correctness, quietly turn out to give AI a tractable, machine-readable structure to reason against. The signature is the spec. The compiler is the reviewer. The ambiguity that produces hallucinations in loosely-typed code disappears when the type system makes the implicit explicit.

EARS is the same argument, one layer up.

What strict types do for code, EARS does for requirements. What sealed traits and pattern-match exhaustiveness do for case handling, the EARS unwanted-behavior pattern does for failure-mode enumeration. What an effect signature such as ZIO[Env, Error, Result] does for declaring dependencies and failure channels, "When X, the system shall Y / If failure-X, then Z" does for declaring triggers and unwanted behaviors. What the Scala compiler does as an automated reviewer of every AI-generated change, the LLM gap-analysis pass does as an automated reviewer of every requirements revision.

Different layers, same discipline: precision at the structural level, applied where ambiguity hurts most, augmented by an automated reviewer that runs on every iteration.

This is also why the two stack. Teams writing EARS requirements that feed into a strongly-typed Scala codebase get compounding effects: precise requirements drive precise type signatures drive precise compiler-validated implementations. A vague requirement still produces vague code in any language, but the gap is bigger and louder in a strongly-typed system, which is itself a forcing function on going back and fixing the requirement. Teams writing vague requirements and feeding them to a loosely-typed codebase compound in the other direction: the ambiguity at every layer multiplies, the AI fills each layer's gaps with confident-sounding guesses, and the result is plausible-looking code that is individually reasonable at every step and collectively wrong by the time it reaches production.

There is no single move that solves AI-assisted development. There is a stack of disciplines that make AI a useful collaborator: structured requirements at the top, typed effects in the middle, compiler-validated changes at the bottom. SDD addresses none of these. EARS addresses one. Strong types and pure functions address another. The teams that win are the ones that adopt all of them and stop looking for the methodology that promises to skip the discipline.

Why This Keeps Mattering (And Why Teams Keep Getting It Wrong)

This pattern is not specific to SDD. Every methodology pitched as solving the requirements problem has been a partial solution that mostly relocated the problem. Each was sold as a transformation. Each delivered a fraction of what it promised. Teams adopted them, integrated them, generated artifacts in their formats, and continued shipping software that did not do quite what anyone expected. The systems kind of, sort of worked, enough to ship, not enough to be right, and everyone agreed to call that success.

SDD is shaping up to be the next entry in this sequence, and the cost of repeating the cycle is real. Organizations are making decisions about tooling, training, and process based on the current SDD pitch. Some of those decisions will be expensive. The ones that hold up are the ones built on what actually works at the layer where requirements problems live: precise sentences, explicit scope, and structured review augmented by models that have something structured to review.

That is not a transformation. It is a discipline. The teams that adopt it will quietly outperform the teams that buy the new methodology, in the same way that the teams that wrote good Jira tickets quietly outperformed the teams that bought the agile coaching package, and in the same way that the teams that modeled their domain quietly outperformed the teams that bought into DDD as a brand.

The pattern is the point. We keep building new flawed systems that kind of, sort of work, and then naming the next flawed system after the part of the previous one that disappointed us. SDD will not be the last of these. There will be a successor methodology in two or three years that promises to fix what SDD did not, and it will also relocate the problem rather than solve it, because the requirements problem is not a tooling problem. It is a thinking problem. The tools that work are the ones that force the thinking. Everything else is just where you put the file.

The unglamorous toolchain keeps winning. It would help if we stopped being surprised by that.

Scala Teams builds and extends engineering teams for companies running Scala in production. If you're investing in tighter requirements and AI-assisted development, talk to us about putting the right engineers behind it.

Frequently Asked Questions

What is EARS (Easy Approach to Requirements Syntax)?

EARS is a writing standard developed at Rolls-Royce that reduces requirements to five sentence patterns: ubiquitous, event-driven, state-driven, optional feature, and unwanted behavior. Each pattern forces the writer to be explicit about triggers, conditions, and failure modes. It is not a methodology or a tool. It is a syntactic discipline that makes underspecification visible at the sentence level, which is where the requirements problem actually lives.

How is EARS different from Spec-Driven Development?

Spec-Driven Development changes where requirements are stored and how they drive code generation. EARS changes how requirements are written. SDD relocates the problem; EARS attacks it. A team using SDD with poorly written specs will generate poorly specified systems faster. A team using EARS will write requirements that are precise enough to expose gaps before any code is generated, whether by AI or by hand.

Why do LLMs produce better gap analysis on structured requirements?

A vague requirement gives a model nothing to anchor against, so it responds with generic advice. A requirement written in EARS gives the model an enumerable surface: specific triggers, states, and failure modes it can compare against similar systems in its training data. The structure is what makes coverage analysis tractable. The same model, given the same system, will surface meaningfully different and more specific gaps when the requirements are structured than when they are prose.

What is the scope trap in LLM-assisted requirements review?

When a spec lacks an explicit scope statement, a model compares it against an arbitrarily large reference architecture and surfaces gaps relative to a production system far larger than what was intended. This causes teams to expand scope under the guise of completing requirements. The fix is to write explicit scope statements first, in EARS-style "shall" and "shall not" constructions, and to prompt the model to flag only gaps relative to the stated scope. Scope expansion suggestions should be surfaced separately, requiring explicit approval.