Is Scala Good for Data Science? Programs and Libraries Explained

If you’re searching for which Scala program is used for data science, you’re likely comparing tools before committing to a stack. Scala does not dominate beginner data science tutorials, but it plays a major role in large-scale data systems. The answer depends on whether you are experimenting locally, building distributed pipelines, or deploying models into production infrastructure.

In most enterprise environments, one program stands out: Apache Spark.

But Spark is only part of the story. To fully answer how Scala is used in data science, and why teams choose it, we need to look at scale, ecosystem tradeoffs, and real-world workflows.

This article explains how Scala fits into modern data science workflows and where it provides leverage compared to Python. Key points include:

- Apache Spark is the primary Scala-based platform used for large-scale data processing and machine learning

- Scala plays a central role in distributed, production-grade, and JVM-integrated data systems

- Python dominates experimentation and notebook-driven exploration, while Scala strengthens production pipelines

- Spark MLlib leads the Scala ecosystem, with Saddle, Spark NLP, and JVM deep learning tools supporting specialized use cases

- Scala becomes most valuable when data science must scale across clusters and integrate with backend services

If your workflow centers on experimentation, Python remains the default. If your priority is distributed systems, strong typing, and direct integration with JVM infrastructure, Scala becomes a strategic choice.

Is Scala Used in Data Science?

Yes, Scala is used in data science. However, it is used differently from Python or R.

Scala is less common in notebook-first experimentation. It is more common in:

- Distributed data processing systems

- Production machine learning pipelines

- Data engineering-heavy workflows

- JVM-based backend platforms

In many companies, data scientists prototype in Python and engineers productionize the workflow in Scala. This is especially true when datasets grow large or when models must integrate directly with backend services.

How Is Scala Used?

When someone asks directly which Scala program is used for data science, the practical answer is Apache Spark.

Spark is written primarily in Scala and remains tightly integrated with it. Spark also offers Java, Python, and R APIs, but Scala is its native language: the Scala API exposes the full feature set, including the typed Dataset API that is available only in Scala and Java, and Scala code runs inside the same JVM as the engine.

Spark supports:

- Distributed batch processing

- Streaming data pipelines

- SQL-style analytics

- Machine learning

- Cluster execution

You can run Spark on a laptop in local mode. The same application can then be submitted to a distributed cluster without rewriting core logic, because the cluster location is supplied as configuration rather than written into the transformations. This ability to move from development to large-scale execution with minimal friction is one of the biggest advantages of Scala with Spark in data-heavy systems.
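As a rough sketch of that local-to-cluster path, the job below keeps its core logic in a function that only receives a `SparkSession`. It assumes Spark 3.x on Scala 2.13 with the `spark-sql` module on the classpath. The object name, the word-count task, and the `"local"` command-line argument are illustrative assumptions, not something the article prescribes.

```scala
import org.apache.spark.sql.SparkSession

object WordCountJob {
  // Core logic: receives a session and never mentions where it runs.
  def countWords(spark: SparkSession, lines: Seq[String]): Map[String, Long] = {
    import spark.implicits._
    lines.toDS()
      .flatMap(_.toLowerCase.split("\\s+"))
      .filter(_.nonEmpty)
      .groupByKey(identity)
      .count()
      .collect()
      .toMap
  }

  def main(args: Array[String]): Unit = {
    // Assumption: a first argument of "local" selects laptop mode.
    // Under spark-submit, the master comes from the --master flag instead.
    val builder = SparkSession.builder().appName("word-count-sketch")
    val spark =
      if (args.headOption.contains("local")) builder.master("local[*]").getOrCreate()
      else builder.getOrCreate()
    try countWords(spark, Seq("spark runs locally", "spark runs on clusters")).foreach(println)
    finally spark.stop()
  }
}
```

Only the session construction differs between the two environments; `countWords` is the same code in both.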

If your data fits comfortably in memory on a single machine, Spark may be unnecessary. If your data outgrows a single machine, which often happens once it reaches hundreds of millions or billions of records, Spark becomes practical. For large-scale analytics, Spark is the default answer.

Machine Learning in Scala: MLlib

Machine learning in Scala typically happens inside Spark. Spark ships with MLlib, its built-in machine learning library, which provides distributed implementations of common algorithms.

MLlib focuses on scalable, production-oriented machine learning rather than experimental research models.

It includes support for:

- Classification

- Regression

- Clustering

- Dimensionality reduction

- Feature engineering

- Pipeline construction

MLlib integrates directly with Spark DataFrames, making it suitable for structured data at scale. Because it runs within Spark’s distributed engine, models can train across clusters without custom infrastructure work.

Python vs Scala for Data Science

The conversation around Python vs Scala for data science often frames the two languages as direct competitors. In practice, they solve different problems inside modern data teams.

Python dominates exploration and experimentation. Scala dominates distributed data systems and high-performance Spark jobs.

Instead of choosing one in isolation, many organizations use both.

A structured comparison appears in the table at the end of this section. The main differences are explained first.

What This Means in Practice

Python is optimized for experimentation.

Data scientists can load data quickly, test models, visualize outputs, and iterate fast. Its ecosystem includes mature ML and deep learning libraries, making it a natural first choice for research-heavy workflows.

Scala is optimized for scale and infrastructure alignment.

It integrates natively with Spark, runs on the JVM, and compiles to bytecode. This results in stronger performance characteristics and compile-time type guarantees. In large distributed environments, those characteristics matter.

Typing and Reliability

Python’s dynamic typing allows flexibility during exploration. However, type errors appear at runtime. Scala’s static typing enforces structure at compile time. In complex data pipelines, this reduces runtime failures and makes transformations more explicit.

For small scripts, dynamic typing may feel faster. For multi-stage distributed systems, static typing often improves maintainability.
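To make the multi-stage point concrete, here is a minimal sketch in plain Scala with no Spark dependency. Each stage is a function whose input and output types are declared, so the compiler checks the wiring between stages. The record types and stage names are hypothetical.

```scala
object TypedStages {
  final case class RawEvent(userId: String, amount: String)
  final case class ParsedEvent(userId: String, amount: BigDecimal)
  final case class UserTotal(userId: String, total: BigDecimal)

  // Stage 1: parse raw strings, dropping rows whose amount is not a number.
  val parse: List[RawEvent] => List[ParsedEvent] =
    _.flatMap(e => scala.util.Try(BigDecimal(e.amount)).toOption.map(ParsedEvent(e.userId, _)))

  // Stage 2: total the parsed amounts per user, sorted by user id.
  val aggregate: List[ParsedEvent] => List[UserTotal] =
    _.groupBy(_.userId).toList
      .map { case (id, events) => UserTotal(id, events.map(_.amount).sum) }
      .sortBy(_.userId)

  // The compiler verifies that parse's output type is aggregate's input type.
  val pipeline: List[RawEvent] => List[UserTotal] = parse andThen aggregate

  // Wiring the stages in the wrong order is rejected before anything runs:
  // val broken = aggregate andThen parse   // does not compile: type mismatch
}
```

In a dynamically typed script, the equivalent wiring mistake would typically surface only when the second stage first touched a missing field.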

Performance Considerations

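Python is interpreted and generally slower for compute-heavy workloads unless backed by optimized libraries. Scala compiles to JVM bytecode. In Spark, the size of the gap depends on the API. DataFrame and SQL operations are planned and executed by the same JVM engine regardless of the calling language, so PySpark and Scala perform similarly there. The difference shows up with user-defined functions and RDD-style code: Python functions require rows to be serialized between the JVM and Python worker processes, while Scala functions run inside the JVM. For large Spark jobs that rely on custom row-level logic, Scala often delivers measurable performance gains.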

Ecosystem Tradeoffs

Python’s ecosystem remains larger for pure machine learning and deep learning. Libraries such as scikit-learn, TensorFlow, and PyTorch dominate research and experimentation.

Scala’s ecosystem is smaller for standalone ML, but it excels in distributed data engineering through Apache Spark and its native APIs.

If your workflow centers on large distributed datasets, Scala gains relevance. If your workflow centers on experimental modeling, Python often remains simpler.

| Aspect | Python | Scala | When to Choose |
|---|---|---|---|
| Primary Strength | Notebook-driven experimentation and rapid model iteration | Distributed systems and production-grade data pipelines | Use Python for research and prototyping. Use Scala for cluster-scale systems. |
| Data Processing | Pandas-based local analysis and PySpark for distributed jobs | Native integration with Apache Spark and JVM-based big data tools | Choose Scala when Spark performance and tight JVM integration matter. |
| Machine Learning Ecosystem | scikit-learn, TensorFlow, PyTorch, and broad community support | Spark MLlib, Spark NLP, and JVM deep learning libraries | Python for model diversity. Scala for Spark-native ML pipelines. |
| Performance at Scale | Relies on C-backed libraries or distributed frameworks | Runs on the JVM with strong performance in distributed environments | Scala for long-running distributed workloads and backend integration. |
| Type System | Dynamic typing enables rapid coding and flexible experimentation | Static typing with compile-time checks and functional modeling | Scala for large teams and systems where refactor safety matters. |
| Learning Curve | Lower barrier to entry and broad adoption across industries | Steeper learning curve with functional programming concepts | Python for quick onboarding. Scala for teams with JVM experience. |
| Backend Integration | Requires APIs or service layers for JVM integration | Direct interoperability with Java and existing JVM services | Scala when data science must integrate with backend microservices. |
| Workflow Stage Fit | Exploration, visualization, and early-stage modeling | Production deployment, streaming, and distributed processing | Many teams use Python for research and Scala for production pipelines. |

Why Use Scala for Data Science?

If Python offers more libraries, why use Scala for data science? The answer usually relates to infrastructure and maintainability. Scala allows teams to unify data processing and backend services under one language. It reduces friction between data engineering and application engineering.

Teams often choose Scala when:

- They already operate Spark clusters

- Their backend stack runs on the JVM

- They require compile-time guarantees for complex pipelines

- They need consistent performance at scale

Scala’s type system can model transformations explicitly. Case classes and algebraic data types provide structure to datasets. This can reduce runtime surprises in large pipelines.
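A small sketch of what that modeling can look like, using only the standard library. The event types and the revenue rule are hypothetical examples chosen for illustration.

```scala
object EventModel {
  // An algebraic data type: every record is exactly one of these shapes.
  sealed trait Event
  final case class PageView(userId: String, url: String) extends Event
  final case class Purchase(userId: String, amountCents: Long) extends Event
  final case class Refund(userId: String, amountCents: Long) extends Event

  // Because Event is sealed, the compiler warns if a case is missing here.
  def revenueDelta(event: Event): Long = event match {
    case Purchase(_, cents) => cents
    case Refund(_, cents)   => -cents
    case PageView(_, _)     => 0L
  }

  // Net revenue is the sum of the per-event deltas.
  def netRevenue(events: Seq[Event]): Long = events.map(revenueDelta).sum
}
```

If a new event type is later added to the sealed hierarchy, the non-exhaustive match in `revenueDelta` is flagged at compile time rather than discovered in a running pipeline.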

Scala is less focused on exploratory convenience and more on long-term system stability.


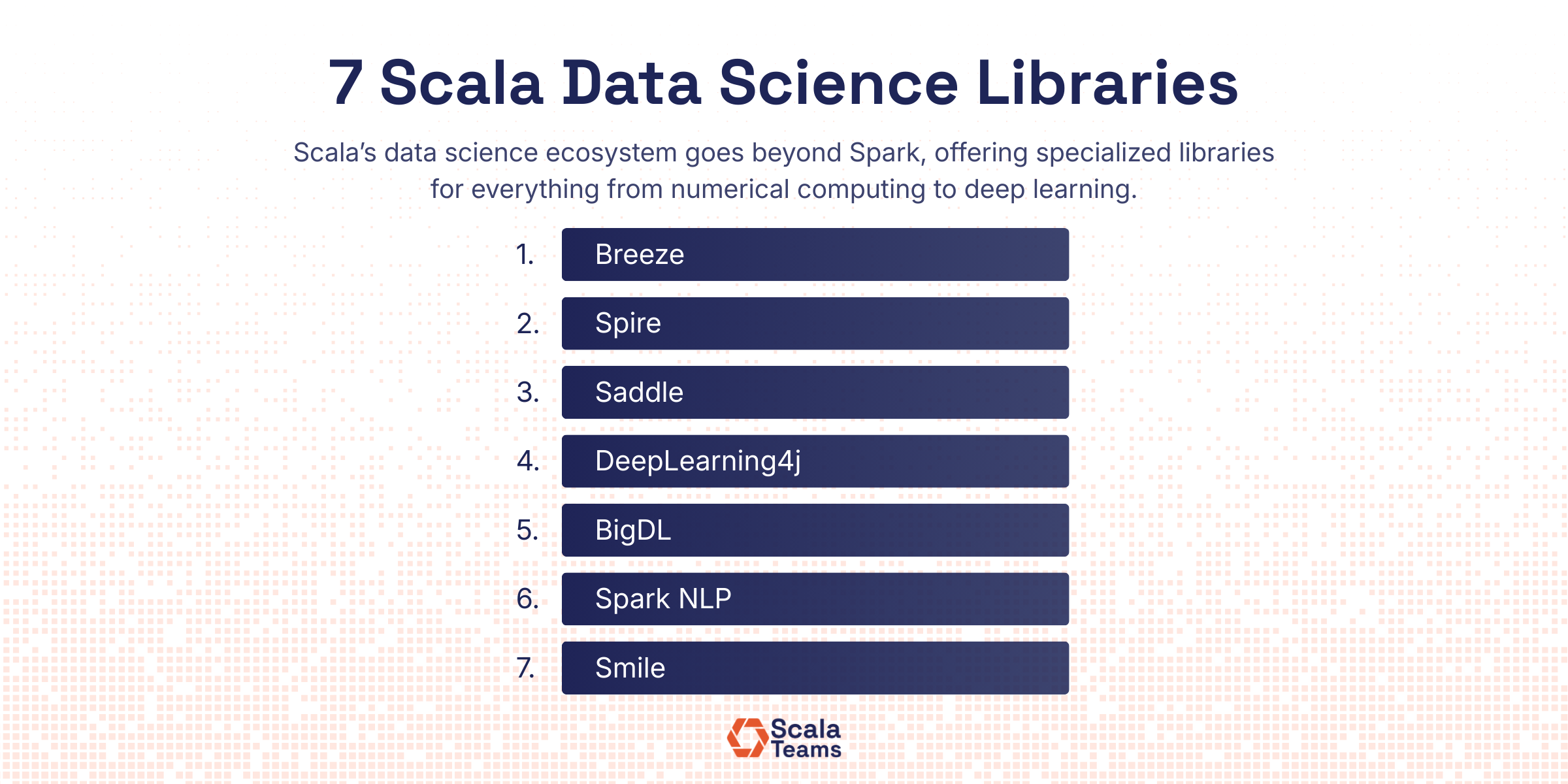

Scala Data Science Libraries

While Spark is the dominant answer, there are additional Scala data science libraries for specific needs. These tools vary in maturity and scope, but they expand Scala’s capabilities beyond distributed processing.

1. Numerical Computing

Spire

Focuses on precise and generic numeric computation using Scala’s type system. It fits use cases where numeric control, compile-time guarantees, and performance matter.

2. DataFrame-Style Manipulation

Saddle

Offers indexed collections and structured data manipulation similar to pandas. It works well for tabular data processing inside JVM applications when Spark is not required.

3. Deep Learning and Advanced Machine Learning

DeepLearning4j

Provides neural network capabilities within the JVM ecosystem. It supports integration with existing Java and Scala systems.

BigDL

Integrates deep learning directly with Spark. It enables distributed training across clusters without leaving the JVM stack.

4. Natural Language Processing

Spark NLP

Built on Spark for scalable NLP pipelines. It is widely used in enterprise text processing and large document workflows.

5. Visualization and Analytics

Smile

Includes machine learning algorithms and basic visualization capabilities. It supports classical ML workflows and lightweight analytics inside JVM environments.

What a Scala-Based Data Science Pipeline Looks Like

To understand how Scala works in practice, consider a simple Spark-based workflow.

A basic Scala data science example might follow this sequence:

1. Load a CSV dataset into a Spark DataFrame.

2. Clean and transform columns using functional transformations.

3. Assemble features into vectors using Spark’s feature utilities.

4. Train a logistic regression model using MLlib.

5. Evaluate model accuracy.

6. Save the trained model to storage.
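The sequence above can be sketched roughly as follows, assuming Spark 3.x on Scala 2.13 with `spark-sql` and `spark-mllib` on the classpath. The column names (`age`, `monthly_spend`, `label`), the object name, and the two command-line paths are hypothetical; a real dataset would need its own cleaning rules.

```scala
import org.apache.spark.ml.{Pipeline, PipelineModel}
import org.apache.spark.ml.classification.LogisticRegression
import org.apache.spark.ml.evaluation.MulticlassClassificationEvaluator
import org.apache.spark.ml.feature.VectorAssembler
import org.apache.spark.sql.{DataFrame, SparkSession}
import org.apache.spark.sql.functions.col

object ChurnPipeline {
  // Hypothetical schema: two numeric feature columns plus a 0/1 "label" column.
  val featureCols: Array[String] = Array("age", "monthly_spend")

  // Step 1: load a CSV file with a header row, letting Spark infer column types.
  def load(spark: SparkSession, path: String): DataFrame =
    spark.read.option("header", "true").option("inferSchema", "true").csv(path)

  // Step 2: drop incomplete rows and make sure the label is a Double.
  def clean(raw: DataFrame): DataFrame =
    raw.na.drop(featureCols :+ "label").withColumn("label", col("label").cast("double"))

  // Steps 3 and 4: assemble the feature vector and fit logistic regression as one pipeline.
  def train(training: DataFrame): PipelineModel = {
    val assembler = new VectorAssembler().setInputCols(featureCols).setOutputCol("features")
    val lr = new LogisticRegression().setLabelCol("label").setFeaturesCol("features").setMaxIter(20)
    new Pipeline().setStages(Array(assembler, lr)).fit(training)
  }

  // Step 5: accuracy of the model's predictions on a held-out set.
  def accuracy(model: PipelineModel, test: DataFrame): Double =
    new MulticlassClassificationEvaluator()
      .setLabelCol("label").setPredictionCol("prediction").setMetricName("accuracy")
      .evaluate(model.transform(test))

  def main(args: Array[String]): Unit = {
    val Array(inputPath, modelPath) = args // hypothetical CLI arguments
    val spark = SparkSession.builder().appName("churn-pipeline-sketch").getOrCreate()
    try {
      val Array(trainDf, testDf) =
        clean(load(spark, inputPath)).randomSplit(Array(0.8, 0.2), seed = 42L)
      val model = train(trainDf)
      println(s"accuracy = ${accuracy(model, testDf)}")
      // Step 6: persist the fitted pipeline, replacing any previous copy.
      model.write.overwrite().save(modelPath)
    } finally spark.stop()
  }
}
```

Saving the whole `PipelineModel`, rather than only the regression stage, keeps the feature assembly and the model together so that scoring code applies the same transformations as training.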

The same application can run locally for development and then execute on a distributed cluster without structural changes. This portability is one of Scala’s strongest advantages in enterprise systems. It reduces the gap between experimentation and deployment.

Do You Always Need a Library?

Not every data workflow requires a specialized framework.

Scala’s standard library provides:

- Immutable collections

- Functional transformations such as map and reduce

- Case classes for structured data modeling

- Pattern matching for transformation logic

For workflows focused on structured transformations rather than advanced statistical modeling, Scala alone can handle much of the pipeline logic.
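As an illustration of how far the standard library alone can go, the sketch below summarizes a small set of records with no external dependency. The `Reading` type, the sensor names, and the temperature bands are made up for the example.

```scala
object ReadingsSummary {
  final case class Reading(sensor: String, celsius: Double)

  // Pattern matching with guards keeps the bucketing rules in one place.
  def band(celsius: Double): String = celsius match {
    case c if c < 0.0  => "freezing"
    case c if c < 25.0 => "normal"
    case _             => "hot"
  }

  // map + reduce over an immutable collection: mean temperature per sensor.
  def meanBySensor(readings: List[Reading]): Map[String, Double] =
    readings.groupBy(_.sensor).map { case (sensor, rs) =>
      sensor -> rs.map(_.celsius).reduce(_ + _) / rs.size
    }

  def main(args: Array[String]): Unit = {
    val readings = List(Reading("a", 20.0), Reading("a", 30.0), Reading("b", -4.0))
    meanBySensor(readings).foreach { case (sensor, mean) =>
      println(s"$sensor: $mean (${band(mean)})")
    }
  }
}
```

Nothing here mutates state, so the same functions can be reused unchanged inside a larger job or a test suite.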

In some systems, Spark is used for data movement and aggregation while core business logic remains pure Scala code.
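One way this split can look, assuming Spark 3.x on Scala 2.13 with `spark-sql` available: the business rule is an ordinary function over case classes, and Spark only applies it across the dataset. The `Order` type and the tax rates are invented illustration values.

```scala
import org.apache.spark.sql.{Dataset, SparkSession}

object PureLogicOnSpark {
  final case class Order(id: Long, amount: Double, country: String)
  final case class PricedOrder(id: Long, total: Double)

  // Pure business rule: no Spark types, so it can be tested as ordinary Scala.
  // The rates below are made-up values for illustration only.
  def price(order: Order): PricedOrder = {
    val rate = order.country match {
      case "DE" => 0.19
      case "FR" => 0.20
      case _    => 0.0
    }
    PricedOrder(order.id, order.amount * (1.0 + rate))
  }

  // Spark distributes the data; the rule itself is the plain function above.
  def priceAll(spark: SparkSession, orders: Dataset[Order]): Dataset[PricedOrder] = {
    import spark.implicits._
    orders.map(o => price(o))
  }
}
```

Because `price` has no dependency on Spark, it can be covered by fast unit tests, while `priceAll` needs only a thin integration check.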

When Does Scala Make Sense for Data Science?

If your question is simply which Scala program is used for data science, the answer is clear: Apache Spark.

But the more useful question is when Scala makes sense in a data workflow.

Scala is used in data science when systems need to scale, run on distributed infrastructure, and integrate directly with backend services.

When comparing Python and Scala, the distinction usually comes down to stage:

- Python fits early experimentation, visualization, and research-heavy modeling.

- Scala fits large-scale Spark jobs, structured pipelines, and long-term production systems.

Spark and MLlib are the dominant Scala data science libraries, while tools like Saddle, Spark NLP, and JVM-based deep learning libraries support more specific use cases. The ecosystem is smaller than Python’s, but it is aligned with distributed data engineering.

From a practical standpoint, an example of Scala being used for data science typically looks like this: ingest data with Spark, transform it using functional patterns, train models with MLlib, and deploy the result into a distributed environment without changing languages.

The decision ultimately depends on scale and architecture. If your data science work must operate across clusters and live inside production systems, Scala is often a natural fit.

Curious if Scala is the right fit for your goals? Book a call with Scala Teams to see how we can help.